Doi:

Abstract:

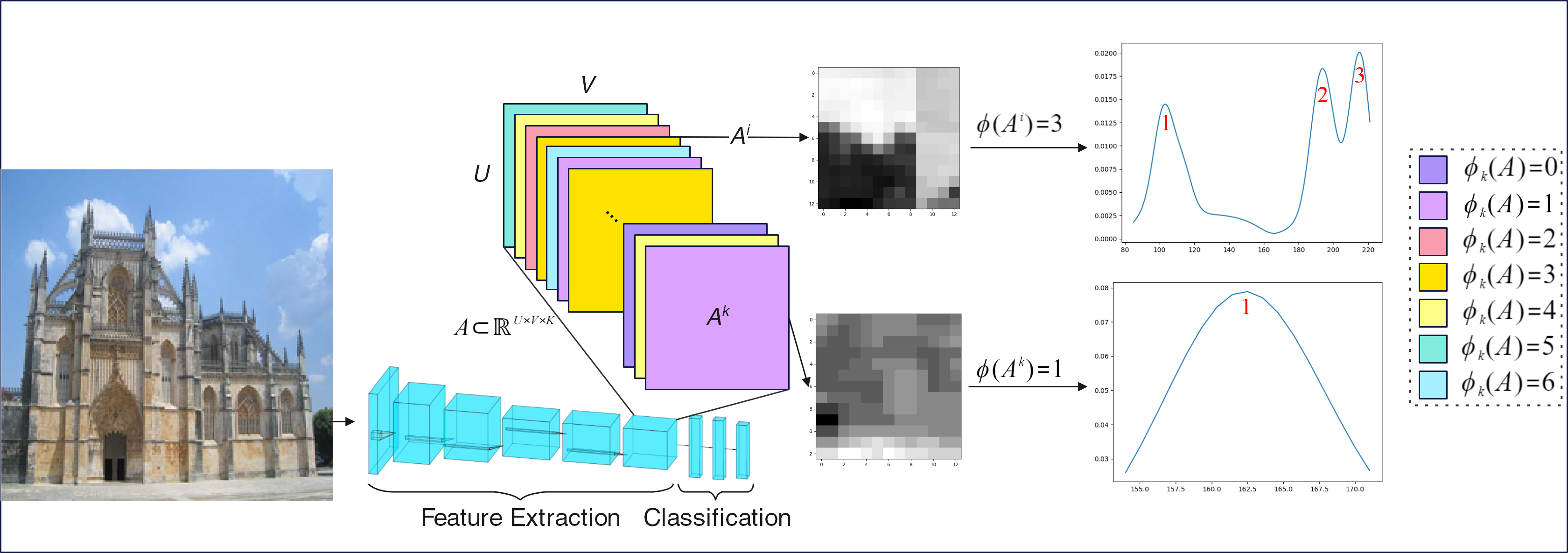

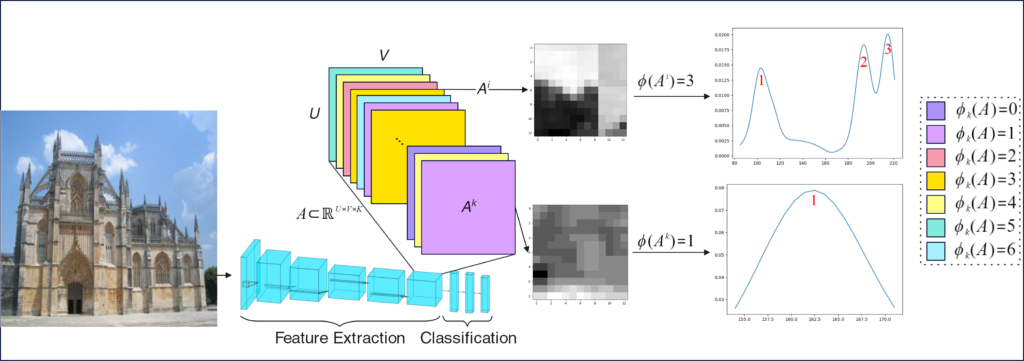

Equipping Convolutional Neural Networks (CNNs) with explainability is essential for interpreting how opaque models reach specific decisions, understanding the causes of their errors, improving architecture design, and identifying unethical biases in classifiers. This paper introduces ADVISE, a new explainability method that quantifies and leverages the relevance of each unit of the feature map to provide better visual explanations. To this end, we propose using adaptive bandwidth kernel density estimation to assign a relevance score to each unit of the feature map with respect to the predicted class. We also propose an evaluation protocol to quantitatively assess the visual explainability of CNN models. Our extensive evaluation of ADVISE on image classification tasks, using AlexNet, VGG16, ResNet50, and Xception models pretrained on ImageNet, shows that our method outperforms other visual explanation methods in quantifying feature relevance and visual explainability while maintaining competitive time complexity. Our experiments further show that ADVISE fulfils the sensitivity and implementation independence axioms while passing the sanity checks. The implementation is available for reproducibility purposes at https://github.com/dehshibi/ADVISE.
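
For readers unfamiliar with adaptive bandwidth kernel density estimation, the following is a minimal, generic sketch of the technique on one-dimensional data. It is not the ADVISE implementation. The function names, the Gaussian kernel, the normal reference rule for the global bandwidth, and the sensitivity parameter `alpha` are all assumptions made for illustration. The idea is to compute a fixed-bandwidth pilot estimate first, then widen the kernel where the pilot density is low and narrow it where the density is high.

```python
import numpy as np


def gaussian_kernel(u):
    """Standard normal density evaluated elementwise."""
    return np.exp(-0.5 * u ** 2) / np.sqrt(2.0 * np.pi)


def adaptive_kde(samples, grid, alpha=0.5):
    """Adaptive-bandwidth KDE for 1-D data (illustrative sketch only).

    samples: 1-D array of observations (assumed non-constant).
    grid:    1-D array of points at which to evaluate the density.
    alpha:   hypothetical sensitivity parameter in [0, 1];
             alpha = 0 reduces to a fixed-bandwidth KDE.
    """
    x = np.asarray(samples, dtype=float)
    grid = np.asarray(grid, dtype=float)
    n = x.size

    # Global bandwidth from the normal reference rule (an assumed choice).
    h = 1.06 * x.std(ddof=1) * n ** (-0.2)

    # Pilot density at every sample, using the fixed bandwidth h.
    pilot = gaussian_kernel((x[:, None] - x[None, :]) / h).mean(axis=1) / h

    # Local bandwidth factors: low pilot density gives a wider kernel.
    geo_mean = np.exp(np.mean(np.log(pilot)))
    h_local = h * (pilot / geo_mean) ** (-alpha)

    # Final estimate: average of kernels, each with its own bandwidth.
    u = (grid[:, None] - x[None, :]) / h_local[None, :]
    return (gaussian_kernel(u) / h_local[None, :]).mean(axis=1)
```

In this sketch, the density value at a point could serve as a raw score for how typical that value is among the samples. How ADVISE maps such estimates to per-unit relevance scores is defined in the method description and the linked repository, not here.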